Log Management and Analytics

powered by Grail

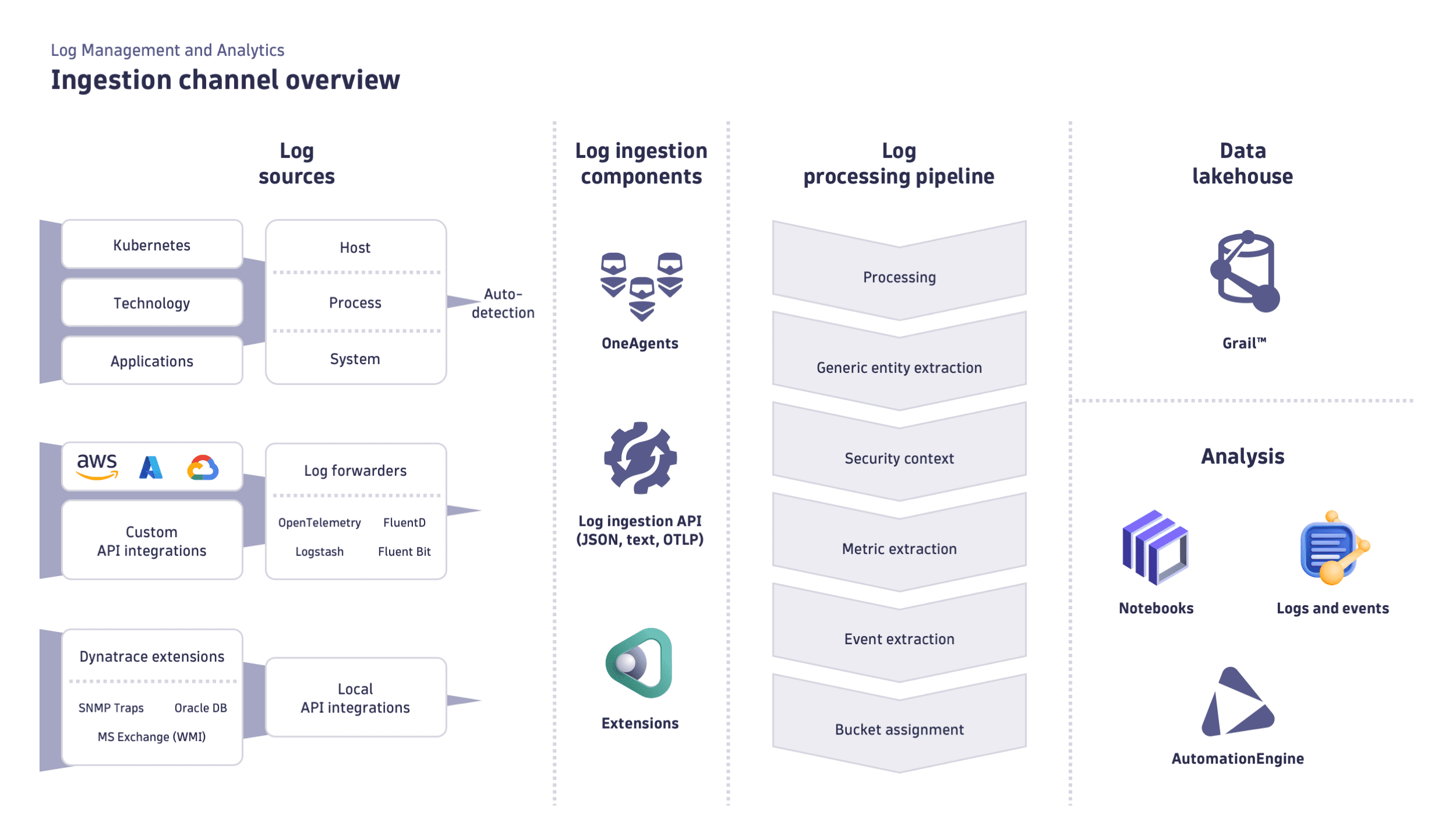

Log Management and Analytics, powered by Grail, provides a unified approach to unlocking the value of log data in the Dynatrace platform.

Hassle-free management of your log data lets you store petabytes of data without schemas, indexing, or rehydration. All of that data is usable at any time for any analytics task. Thanks to schema-on-read and the Dynatrace Query Language, there's no need to decide at ingestion time what you will want to query later. Pick the retention period that fits your business and compliance needs, whether for debugging or for audit.

After using OneAgent or the generic ingestion API to bring your log data into Dynatrace, you can observe the data in the context of your full stack, including real user impacts or traces. Dynatrace Log Management and Analytics allows you to automate your log tasks.

- Automatically see precise problem root causes in real time to simplify cloud complexity.

- Automate operations and trigger remediation workflows to enhance efficiency.

- Automatically ingest logs, metrics, and traces, with continuous dependency mapping and precise context across hybrid and multicloud environments.
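
As a rough illustration of the generic ingestion API route mentioned above, the Python sketch below builds, without sending, an HTTP request that carries one log record. The endpoint path `/api/v2/logs/ingest`, the `Api-Token` authorization scheme, the record attribute names, and the placeholder URL and token are assumptions made for illustration; check them against the log ingestion API reference for your environment before use.

```python
import json
import urllib.request
from datetime import datetime, timezone


def build_log_ingest_request(base_url, api_token, records):
    """Build (but do not send) an HTTP POST request carrying log records as JSON."""
    # Assumed endpoint path; confirm it in the API reference for your environment.
    url = base_url.rstrip("/") + "/api/v2/logs/ingest"
    body = json.dumps(records).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json; charset=utf-8",
            # Assumed token scheme; the token value here is a placeholder.
            "Authorization": "Api-Token " + api_token,
        },
    )


# Hypothetical record; the attribute names are illustrative only.
record = {
    "timestamp": datetime.now(timezone.utc).isoformat(),
    "severity": "error",
    "content": "Payment service failed to reach the database",
}

request = build_log_ingest_request(
    "https://example.invalid", "PLACEHOLDER_TOKEN", [record]
)
# Sending is deliberately left out; urllib.request.urlopen(request) would perform the call.
```

Building the request separately from sending it keeps the payload easy to inspect and test before any data leaves the machine.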

DDU consumption

The DDU consumption model applies to cloud Log Management and Analytics. For details, see DDUs for Log Management and Analytics.

Availability and previous versions

Log Management and Analytics is the latest log offering available in Dynatrace SaaS with Grail.

Troubleshooting

If your ingested logs don't look as expected, check whether the affected log record carries warnings raised in the log ingest and processing pipeline. In Notebooks, look for the dt.ingest.warnings attribute on the record; it lists the issues that affected that particular log record.

Examples of possible warnings:

| Warning | Description |
|---|---|
| content_trimmed | The content was trimmed after being received by the API because it exceeded the event content maximum byte size limit. |
| content_trimmed_pipe | The content was trimmed after processing rules were applied because it exceeded the event content maximum byte size limit. |
| attr_count_trimmed | The number of attributes was trimmed after being received by the API because it exceeded the maximum number of attributes in a single event. |
| attr_count_trimmed_pipe | The number of attributes was trimmed after processing rules were applied because it exceeded the maximum number of attributes in a single event. |
| attr_val_count_trimmed | At least one multi-value attribute had the number of values trimmed, after being received by the API, because it exceeded the maximum number of attributes in a single event. |
| attr_val_count_trimmed_pipe | After applying processing rules, at least one multi-value attribute had its value number trimmed because it exceeded the maximum number of attributes. |
| attr_val_size_trimmed | At least one attribute value size was trimmed after being received by the API because it exceeded the value maximum byte size limit. |
| attr_val_size_trimmed_pipe | At least one attribute value size was trimmed after processing rules were applied because it exceeded the value maximum byte size limit. |
| timestamp_corrected | The timestamp was too far in the future and was corrected to the current time. |
| common_attr_corrected | At least one of the following attributes was corrected: |
| processing_batch_timeout | Batch timeout occurred while executing log processing rules. |
| processing_transformer_timeout | Execution timeout occurred in one of the processing transformers while executing log processing rules. |
| processing_transformer_error | Execution error occurred in one of the processing transformers while executing log processing rules. |
| processing_transformer_throttled | Execution throttled in one of the processing transformers while executing log processing rules. |
| processing_output_record_conversion_error | Output conversion error occurred for some records while executing log processing rules. |
| processing_prepare_input_error | “Prepare input error” occurred in one of the enabled log processing rules. |
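
Once records have been exported from a query, the warning codes in the table above can be interpreted with a small helper. The Python sketch below is one possible approach, not part of the product: it assumes each record is a dictionary whose dt.ingest.warnings value is either a list of codes or a single code string, and the function name, stage labels, and shortened descriptions are hypothetical.

```python
# Warning codes with shortened descriptions, paraphrased from the table above.
INGEST_WARNINGS = {
    "content_trimmed": "content exceeded the maximum byte size",
    "content_trimmed_pipe": "content exceeded the maximum byte size",
    "attr_count_trimmed": "too many attributes in a single event",
    "attr_count_trimmed_pipe": "too many attributes in a single event",
    "attr_val_count_trimmed": "a multi-value attribute had values trimmed",
    "attr_val_count_trimmed_pipe": "a multi-value attribute had values trimmed",
    "attr_val_size_trimmed": "an attribute value exceeded the maximum byte size",
    "attr_val_size_trimmed_pipe": "an attribute value exceeded the maximum byte size",
    "timestamp_corrected": "timestamp too far in the future, set to current time",
    "common_attr_corrected": "a common attribute was corrected",
    "processing_batch_timeout": "batch timeout in processing rules",
    "processing_transformer_timeout": "transformer execution timeout",
    "processing_transformer_error": "transformer execution error",
    "processing_transformer_throttled": "transformer execution throttled",
    "processing_output_record_conversion_error": "output conversion error",
    "processing_prepare_input_error": "prepare input error in a processing rule",
}


def warning_stage(code):
    """Guess where the warning arose, based only on the naming pattern in the table."""
    if code.endswith("_pipe") or code.startswith("processing_"):
        return "processing"  # raised while or after processing rules ran
    if code.endswith("_trimmed"):
        return "api"  # raised after the record was received by the API
    return "unspecified"  # the table does not name a stage


def describe_warnings(record):
    """Return (code, stage, description) tuples for one log record."""
    raw = record.get("dt.ingest.warnings") or []
    if isinstance(raw, str):  # tolerate a single code stored as a plain string
        raw = [raw]
    return [
        (code, warning_stage(code), INGEST_WARNINGS.get(code, "unknown warning code"))
        for code in raw
    ]


# Hypothetical record for illustration.
sample = {
    "content": "truncated message",
    "dt.ingest.warnings": ["content_trimmed_pipe", "timestamp_corrected"],
}
for code, stage, description in describe_warnings(sample):
    print(f"{code} [{stage}]: {description}")
```

Grouping by stage helps separate limits hit on arrival from problems introduced by processing rules, which usually call for different fixes.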