Log Monitoring Classic

As part of the Dynatrace platform, Log Monitoring gives you direct access to the log content of all your mission-critical applications, infrastructure, and cloud platforms. You can create custom log metrics for smarter and faster troubleshooting, and you can understand log data in the context of your full stack, including the impact on real users.

Log Monitoring Classic is available for SaaS and Managed deployments. For the latest Dynatrace log offering on SaaS, consider upgrading to Log Management and Analytics.

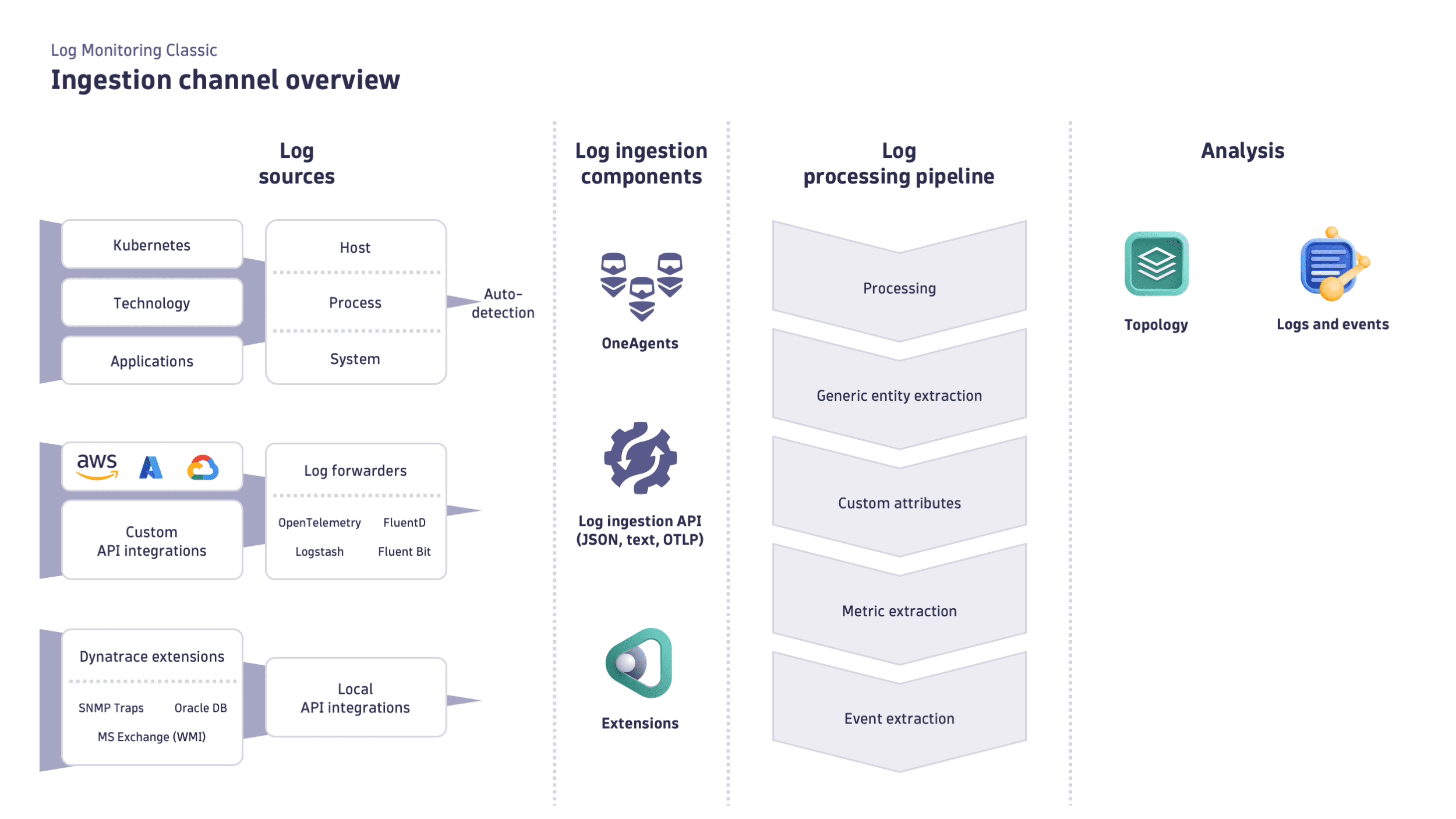

Ingest & Processing

Set up automatic log collection, and extract value with Log Processing.

Analysis

Analyze significant log events across multiple logs, across parts of the environment (for example, production), and potentially over a longer timeframe.

Alerting

Define patterns, events, and custom log metrics to receive proactive notifications.

API

Use the Dynatrace API to send log data to Dynatrace and quickly search, aggregate, or export the log content.
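As a rough illustration of sending log data through the API, the sketch below builds (but does not send) an HTTP request carrying a small batch of log records. The endpoint path `/api/v2/logs/ingest`, the `Api-Token` authorization scheme, the `content` and `severity` record keys, the environment URL, and the helper name `build_log_ingest_request` are all assumptions made for this example; check them against the API reference for your environment before relying on them.

```python
import json
import urllib.request


def build_log_ingest_request(base_url, api_token, records):
    """Build (but do not send) a POST request carrying a batch of log records."""
    # Assumed endpoint path; confirm it in your environment's API reference.
    url = base_url.rstrip("/") + "/api/v2/logs/ingest"
    body = json.dumps(records).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json; charset=utf-8",
            # Assumed authorization scheme for API tokens.
            "Authorization": "Api-Token " + api_token,
        },
    )


request = build_log_ingest_request(
    "https://example.invalid",  # hypothetical environment URL
    "dt0c01.EXAMPLE",           # placeholder token, not a real credential
    [{"content": "Payment service started", "severity": "info"}],
)
print(request.get_method(), request.full_url)
```

Passing the returned object to `urllib.request.urlopen` would perform the actual call. Keeping construction separate from sending makes the payload easy to inspect before any data leaves the machine.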

Configuration

Get the latest Log Monitoring

Tweak your setup

Default limits

Supported log data format

Connect log data to traces

Log storage configuration

Log Monitoring FAQ

Log data acquisition

Autodiscovery

Add log files manually

Generic log ingestion

Cloud log forwarding

Logs from Kubernetes

Add log sources

Log processing

Log data analysis

Log viewer

Log events

Log metrics

Log custom attributes

Management zones

The previous version, Dynatrace Log Monitoring v1, is a legacy solution.

We strongly encourage you to switch to the latest Dynatrace Log Monitoring version.

Troubleshooting

If your ingested logs don’t look as expected, check whether the affected log record carries warnings about issues that occurred in the log ingest and processing pipeline. In the log viewer, look for the dt.ingest.warnings attribute on the record. It contains a list of warnings about problems that affected that particular log record.

Examples of possible warnings:

| Warning | Description |
|---|---|
| content_trimmed | The content was trimmed after being received by the API because it exceeded the event content maximum byte size limit. |
| content_trimmed_pipe | The content was trimmed after processing rules were applied because it exceeded the event content maximum byte size limit. |
| attr_count_trimmed | The number of attributes was trimmed after being received by the API because it exceeded the maximum number of attributes in a single event. |
| attr_count_trimmed_pipe | The number of attributes was trimmed after processing rules were applied because it exceeded the maximum number of attributes in a single event. |
| attr_val_count_trimmed | At least one multi-value attribute had the number of values trimmed, after being received by the API, because it exceeded the maximum number of attributes in a single event. |
| attr_val_count_trimmed_pipe | After applying processing rules, at least one multi-value attribute had its value number trimmed because it exceeded the maximum number of attributes. |
| attr_val_size_trimmed | At least one attribute value size was trimmed after being received by the API because it exceeded the value maximum byte size limit. |
| attr_val_size_trimmed_pipe | At least one attribute value size was trimmed after processing rules were applied because it exceeded the value maximum byte size limit. |
| timestamp_corrected | The timestamp was too far in the future and was corrected to the current time. |
| common_attr_corrected | At least one of the following attributes was corrected: |
| processing_batch_timeout | Batch timeout occurred during log processing rules execution. |
| processing_transformer_timeout | Execution timeout occurred in one of the processing transformers while executing log processing rules. |
| processing_transformer_error | Execution error occurred in one of the processing transformers while executing log processing rules. |
| processing_transformer_throttled | Execution throttled in one of the processing transformers while executing log processing rules. |
| processing_output_record_conversion_error | Output conversion error occurred for some records while executing log processing rules. |
| processing_prepare_input_error | “Prepare input error” occurred in one of the enabled log processing rules. |
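
When many records are affected, it can help to group them by warning name. The sketch below assumes the log records have already been exported as a list of dictionaries and that dt.ingest.warnings appears either as a list of names or as a single comma-separated string. Both the export shape and the helper names `warnings_of` and `group_by_warning` are hypothetical, chosen only for this example.

```python
from collections import defaultdict

WARNINGS_ATTRIBUTE = "dt.ingest.warnings"


def warnings_of(record):
    """Return the ingest warnings of one record as a list of strings."""
    value = record.get(WARNINGS_ATTRIBUTE)
    if value is None:
        return []
    if isinstance(value, str):
        # Assumption: a single string may hold comma-separated warning names.
        return [name.strip() for name in value.split(",") if name.strip()]
    return [str(name) for name in value]


def group_by_warning(records):
    """Map each warning name to the indexes of the records that carry it."""
    grouped = defaultdict(list)
    for index, record in enumerate(records):
        for name in warnings_of(record):
            grouped[name].append(index)
    return dict(grouped)


# Hypothetical sample records, for illustration only.
sample = [
    {"content": "ok"},
    {"content": "a very long line", "dt.ingest.warnings": ["content_trimmed"]},
    {"content": "x", "dt.ingest.warnings": "timestamp_corrected, content_trimmed"},
]
print(group_by_warning(sample))
```

Grouping by warning name rather than by record makes it easier to see which limit or processing rule accounts for most of the affected logs.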